Build First, Filter After

Until recently, almost none of my 80+ ideas got built. Not because I lacked conviction. Because each one required hiring a developer.

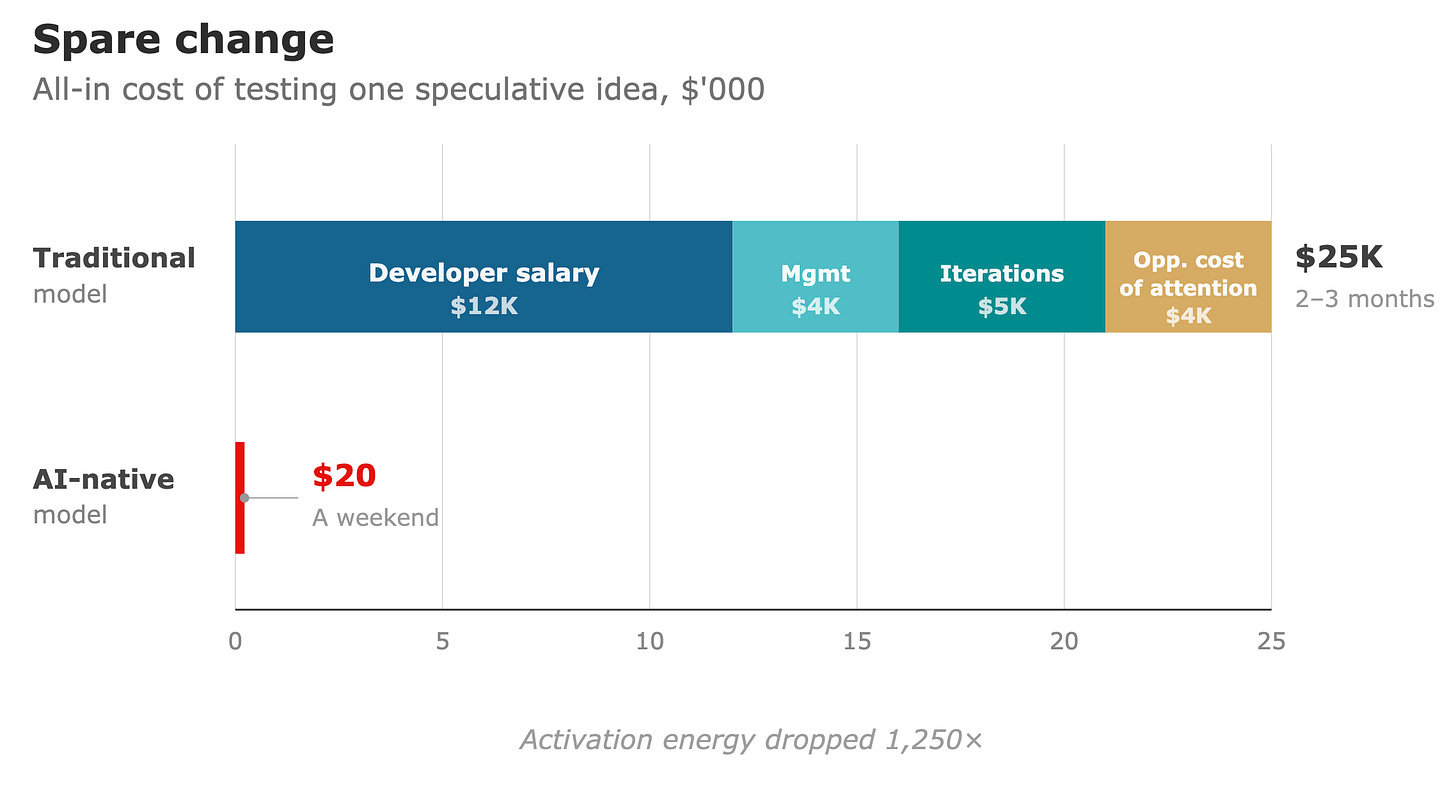

Even a junior engineer meant management overhead, iteration cycles, and a monthly check. The all-in cost of testing a single idea was somewhere between $5K and $15K, plus months of my fragmented attention. And most of my ideas don’t solve problems worth $15K to test. An automation that sends me stock prices in Telegram? A dashboard combining data from two fitness wearables? A tool that recognizes my handwriting? Real itches — but not $15K itches.

So the ideas accumulated in Notion. Products, automations, marketplaces, content projects, dashboards. Waiting for a resource allocation that would never make rational sense.

Then the cost structure changed, and I stopped filtering.

The cost of code approached zero. The cost of building a product didn’t. You still need to understand what to build and why, plan the architecture, handle deployment, manage the edges that break in practice. Most people underestimate the DevOps and the planning. Code was never the whole cost — it was just the part that required someone else.

But removing that dependency changed the economics completely. It eliminated the management overhead, the scheduling, the iteration lag, and the minimum viable commitment. The activation energy dropped from “justify a hire” to “write a clear brief.”

My workflow now: I describe an idea to Claude and ask it to challenge me (poke holes, stress-test assumptions). If the idea survives (many don’t, which is the point), I ask for research on the space. Then: what infrastructure does this need? What knowledge base? Claude asks me the questions I should have asked myself, then builds the project files, meaning the spec, the instructions, and the scaffolding, ready for execution. The building happens asynchronously. While I’m on a call about market expansion or in a weekly business review, the experiment assembles itself in a parallel tab. A third form of leverage, alongside hiring people and deploying capital, that didn’t exist three years ago.

I built an automation that sends me daily stock performance updates straight to Telegram. The thesis: I’m too lazy to open brokerage apps, but I check Telegram every hour, so push the information to where I already am. Reasonable assumption. Wrong: most of the time I don’t actually read the notifications. The alert lands, I glance at it, I keep scrolling.

I also pushed Readwise highlights to Telegram. Same thesis — meet me where I already am. Same result. Then I tried other things through Telegram. Same pattern.

It took three experiments to realize the problem was never the channel. Passive notifications don’t change my behavior. Period. No amount of thinking would have surfaced this — I needed to build the same wrong thing three times to see what was actually wrong.

I built recurring search automations — flights to specific destinations, prices for specific products. These run on schedules and notify me only when results match criteria. No more manually searching, remembering to search, comparing across sessions. Set parameters once, forget about it. Genuinely useful. These survived.
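The core of these automations is simple: run a search on a schedule and stay silent unless something matches. Below is a minimal sketch of that notify-only-on-match pattern. The names `Offer`, `SearchCriteria`, `matching_offers`, and `run_scheduled_check` are hypothetical. The actual search source, the scheduler (cron or similar), and the messaging channel are assumptions, represented here as plain functions you pass in.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class Offer:
    """One search result, e.g. a flight fare or a product listing (hypothetical shape)."""
    name: str
    price: float


@dataclass
class SearchCriteria:
    """Parameters set once and then left alone (hypothetical fields)."""
    max_price: float
    keyword: str


def matching_offers(offers: Iterable[Offer], criteria: SearchCriteria) -> list[Offer]:
    """Keep only results that meet every criterion."""
    return [
        o for o in offers
        if o.price <= criteria.max_price and criteria.keyword.lower() in o.name.lower()
    ]


def run_scheduled_check(
    fetch: Callable[[], list[Offer]],
    criteria: SearchCriteria,
    notify: Callable[[str], None],
) -> int:
    """One scheduled run: search, filter, and notify only if something matched.

    `fetch` stands in for the real search and `notify` for the real channel
    (both assumptions). Returns the number of matches so a scheduler could log it.
    """
    matches = matching_offers(fetch(), criteria)
    if matches:  # silent when nothing matches: no alert, no noise
        lines = [f"{o.name}: {o.price:.2f}" for o in matches]
        notify("Matches found:\n" + "\n".join(lines))
    return len(matches)


# Usage with stubbed data: only the Lisbon fare passes the filter.
sent: list[str] = []
run_scheduled_check(
    lambda: [Offer("Flight to Lisbon", 180.0), Offer("Flight to Tokyo", 720.0)],
    SearchCriteria(max_price=200.0, keyword="lisbon"),
    sent.append,
)
```

Passive alerts didn't change behavior. This pattern differs because it only fires when a condition you chose in advance is met, so every message carries a decision.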

A health dashboard pulling WHOOP and Garmin data works, but I had overestimated its value. An OCR model for my handwriting is pure self-indulgence: still iterating, still enjoying it.

I thought I needed a weekly calendar audit — something to review my time allocation and flag imbalances. Built it. Realized I remember my weeks perfectly well. Weekly is noise. What I actually need is a six-month audit, a zoom-out I can’t do from memory. The idea was right. The cadence was wrong. Only execution revealed which parameter mattered.

I started building a prompt optimization tool, convinced that better prompts were the highest-leverage skill I could sharpen. Midway through, I realized I was already good enough at prompts. The real leverage was context engineering — how I structured and fed information to AI, not how I phrased requests. I was solving the wrong problem, and I couldn’t have known that without building toward the wrong solution first.

The pattern: none of these, right or wrong, would have survived the old filter. The problems they solve are real but small. When the cost drops to small chunks of my own time, the threshold for “worth trying” approaches zero. Some turn out wrong. Some turn out right. The point is that I couldn’t predict which in advance — and more importantly, the wrong ones taught me things the right ones didn’t.

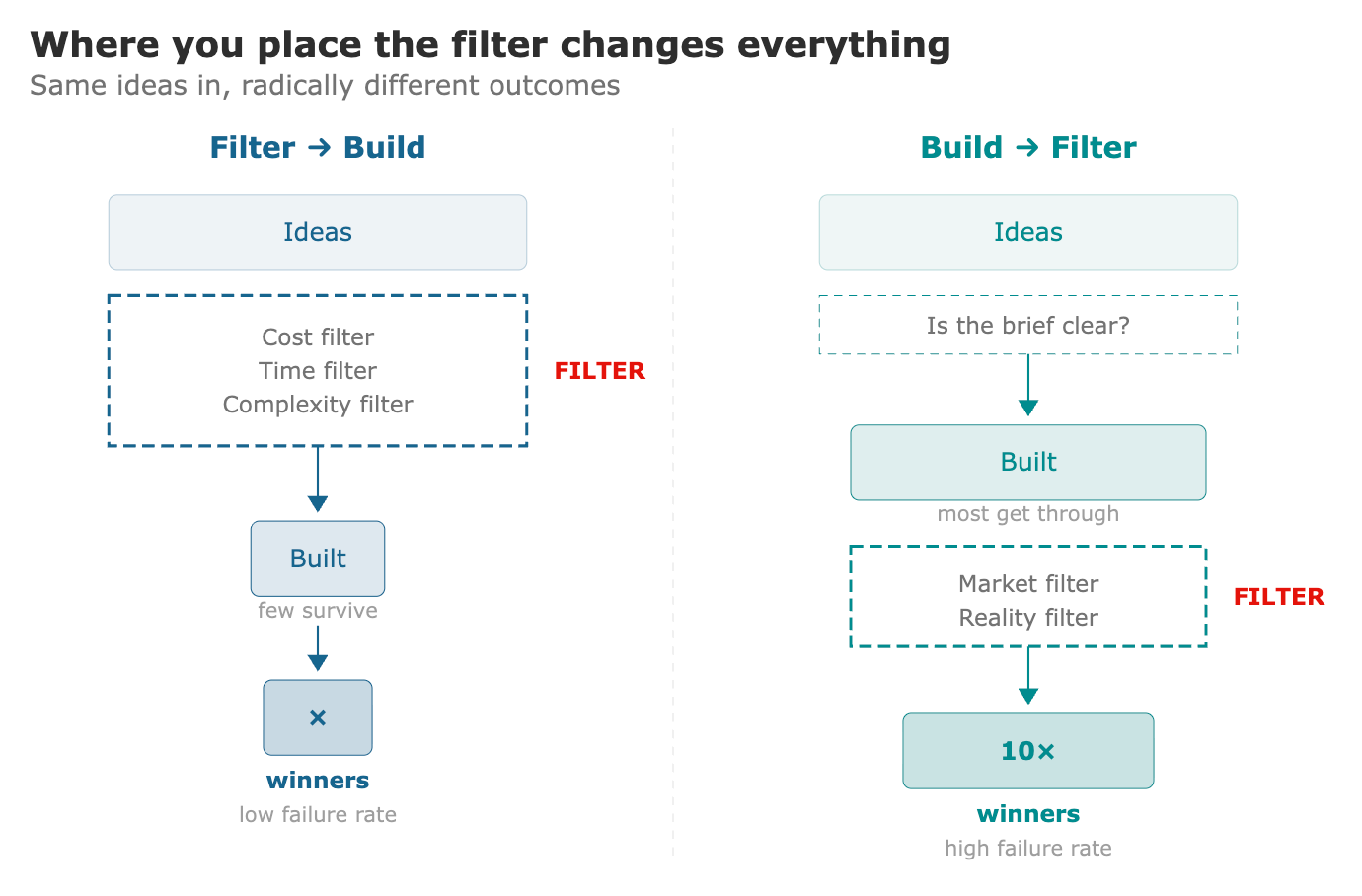

This is where most commentary about AI-built products gets the interpretation backwards.

The standard take: AI unleashed a flood of garbage. Millions of low-quality products nobody asked for. The failure rate is higher than ever.

The percentage is right. The interpretation is wrong.

When you move filtering from before the build to after it, of course the failure rate goes up. You’re testing more hypotheses. More fail. That’s not a crisis — that’s the scientific method applied to product development.

But the “flood of garbage” narrative misses something: the absolute number of things that work is also growing. Not because more good ideas suddenly exist, but because ideas that were previously uneconomical to test now get tested — and some turn out to be right.

My stock alerts didn’t work as expected. My calendar audit needed a completely different cadence. My prompt builder was solving the wrong problem. But my search automations are genuinely useful. The work automations I’ve built (document review scoring, research pipelines, sales pipeline analysis) save real hours every week. Under the old economics, none of these would have been built. The useful ones die alongside the useless ones in the same theoretical filter, because the filter can’t tell them apart. Only reality can.

The denominator exploded: more things built, more things failed. The numerator is growing too: more things finding their audience, even if that audience is small, niche, or just one person. Most people staring at the failure rate miss the numerator entirely.
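To make the numerator/denominator point concrete, here is a toy calculation. The numbers are made up for illustration and are not results from the experiments above. The `Portfolio` name is hypothetical. The calculation shows how building many more things can raise the failure rate and the absolute count of things that work at the same time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Portfolio:
    """A batch of experiments: how many were built and how many turned out useful."""
    built: int   # the denominator
    worked: int  # the numerator

    @property
    def failure_rate(self) -> float:
        return (self.built - self.worked) / self.built


# Hypothetical numbers, for illustration only (not the author's data).
filter_first = Portfolio(built=2, worked=1)   # old economics: only "safe" ideas get built
build_first = Portfolio(built=20, worked=4)   # new economics: cheap experiments, many more of them

for label, p in [("filter first", filter_first), ("build first", build_first)]:
    print(f"{label}: {p.worked}/{p.built} worked, failure rate {p.failure_rate:.0%}")
# filter first: 1/2 worked, failure rate 50%
# build first: 4/20 worked, failure rate 80%
```

The failure rate climbs from 50% to 80%, and the number of things that work still quadruples. Judge by the rate alone and the second strategy looks worse. Judge by the numerator and it is clearly better.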

Venture capital has operated this way for decades. A typical fund invests in 25 to 30 companies expecting most to fail. The discipline isn’t picking winners in advance — it’s managing after deployment. Recognizing signal. Doubling down on what works. Cutting without sentiment.

For decades, only institutional money could run this model. Individuals got one shot, maybe two. Every failed project landed as a personal verdict because the stakes were so high.

Now individuals can run the same emotional architecture. Not at VC scale — you can’t run thirty experiments in parallel with a full-time job. But you can run two or three and cycle fast. When you expect most experiments to produce nothing, each dead one becomes information rather than defeat.

The compounding shows up in unexpected places. I’m not an engineer. Terminal commands, API tokens, integration patterns — none of this was natural for me. But each project, including the failures, made the next one slightly less foreign. The health dashboard taught me API integrations I reused for work automations. The Telegram bots taught me notification architecture. Even the dead prompt builder taught me how to scope a project correctly. Six months in, I’m building in days what took me weeks when I started — not because AI got better, but because I did.

At work, the same logic applies at higher stakes. The heuristic I use with my team: if you can explain it to an intern — clearly enough that they’d get it right without you checking — it’s ready for AI. Not rocket science. But these small automations compound, and the muscle for building them compounds faster.

For most of professional history, you had two ways to get more done than your own time allowed: hire people or invest capital. Both require real commitment. The threshold for “worth doing” was set by these costs, and most ideas stayed theoretical.

Now there’s a third option. Deploy AI on speculative projects asynchronously, at near-zero marginal cost, while your actual job continues. Building is no longer the bottleneck. Knowing what to build, reaching the right people, having the judgment to kill your own bad ideas — that’s where scarcity moved.

But here’s what I didn’t expect: the biggest return isn’t any single product. It’s what happened to my confidence in untested reasoning. I spent years developing careful opinions about what would work. I never tested most of them. Now that I have, I know how often the reasoning was wrong — not in the big thesis, but in the parameters, the assumptions about my own behavior, the second-order effects that only surface on contact with reality.

I don’t trust my hypotheses less. I just hold them differently — as things to test rather than things to refine. 80+ ideas in Notion. Most will turn out wrong. The ones that fail will teach me more than the ones I never started.
